As we’ve reported many times, communities across the U.S. are increasingly pushing back against the explosive growth of AI-driven data centers. Major concerns include skyrocketing electricity demand, massive water consumption for cooling, noise pollution from giant fans, loss of prime agricultural and residential land, and rising utility bills passed on to local residents. As of May 2026, independent trackers report roughly 69 to 78 U.S. jurisdictions that have enacted bans, restrictions, or moratoriums on new data centers. Many of these measures also target the new high-voltage transmission lines required to power them.

This wave of resistance highlights a deepening tension between the rapid expansion of AI infrastructure and local priorities around quality of life, sustainability, and community control.

1. Michigan: The Epicenter of Local Moratoriums

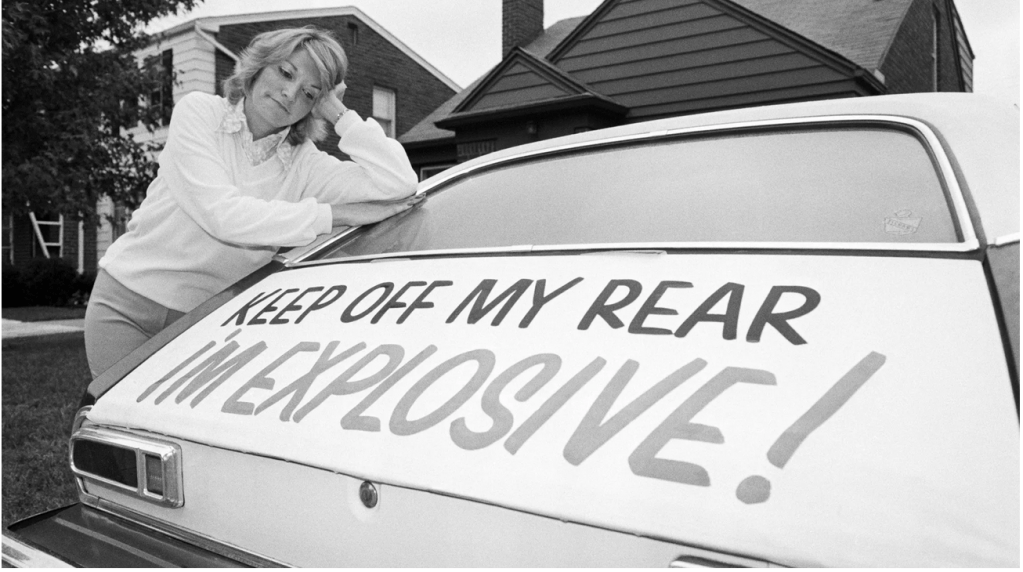

I think it's safe to say that Michigan currently leads the nation in local opposition to data center construction. That opposition was largely triggered by the controversial $16+ billion OpenAI-Oracle Stargate AI data center project in Saline Township, Washtenaw County. Despite a 4-1 township planning commission vote against rezoning and strong resident protests, the Stargate project advanced through legal channels, igniting widespread defensive actions across the state.

- More than 50 communities (cities and townships) have enacted temporary moratoriums, covering roughly 1,500 square miles — an area comparable to the size of Rhode Island.

- Between 25 and 51 active local moratoriums are in place as of early 2026.

- State lawmakers have introduced bills (HB 5594–5596) calling for a one-year statewide pause on new hyperscale data centers, along with stricter rules on water and electricity connections.

- Some local utilities, such as Ypsilanti's, have imposed their own 12-month bans on water hookups for large AI facilities, though those bans will eventually expire.

Key issues in Michigan should sound familiar: massive water usage, strain on the electrical grid, and the loss of local zoning authority.

2. Virginia: Transmission Line Battles in “Data Center Alley”

Virginia is home to the highest concentration of data centers in the United States (over 550 facilities), particularly in Northern Virginia. Opposition here focuses heavily on both the data centers and the extensive transmission lines needed to support them.

- Strong protests in Loudoun, Prince William, Hanover, and other counties against new projects and expansions.

- Major conflicts over high-voltage lines such as the Valley Link and Joshua Falls projects, which cross multiple counties and impact neighborhoods, historic sites, and conserved rural land.

- Dominion Energy has faced repeated legal and community challenges regarding route selections.

- Legislative debates continue over ending billions of dollars in tax incentives, and over studies projecting residential electricity bill increases of up to $37 per month by 2040.

3. Georgia: Statewide Pause Efforts Amid High Project Volume

Georgia has seen hundreds of announced data center projects, prompting both local and statewide responses.

- Bills such as HB 1059 and HB 1012 propose temporary statewide pauses on new permitting (potentially until 2027–2028) to allow time for impact studies.

- Several counties, including DeKalb and Camden, have passed moratoriums ranging from several months to a year while updating zoning ordinances.

- Residents voice concerns about energy costs, water consumption, loss of land, and whether tax incentives truly benefit local communities.

Georgia’s combination of legislative proposals and county-level actions reflects growing resistance in a rapidly developing market.

4. North Carolina: Rising Local and Policy Pushback

North Carolina ranks among the top states for new moratorium activity as data center developers expand beyond traditional East Coast hubs.

- Multiple counties and municipalities have passed restrictions or temporary moratoriums citing infrastructure strain, zoning issues, and community impacts.

- Policy proposals such as HB 1063 seek to require hyperscale developers to fully cover the costs of power, water, and grid upgrades rather than passing them to ratepayers.

- Growing focus on the environmental and visual effects of both data centers and supporting transmission lines.

North Carolina represents an emerging hotspot where early local actions may shape future statewide policy.

5. Indiana: County-Level Resistance and High-Stakes Conflicts

Indiana has seen intense localized opposition, particularly in rural counties.

- Counties such as White and Fulton have enacted 6-to-12-month moratoriums to study impacts and strengthen local ordinances.

- Trackers show at least 6 formal actions, with several others in discussion.

- Primary concerns include the conversion of prime agricultural land, rising utility rates, and the industrialization of rural communities.

Indiana illustrates how even mid-sized proposals can trigger strong community responses and political tension.

Broader Implications and the Path Forward

The five most active states — Michigan, Virginia, Georgia, North Carolina, and Indiana — capture the national picture. Resistance is bipartisan, spans urban and rural areas, and increasingly includes opposition to the massive transmission lines that accompany data center projects.

Common themes include fears that data centers consume disproportionate amounts of power and water while shifting costs onto existing residents. Proponents argue that these facilities bring jobs and tax revenue and are essential for America’s AI competitiveness. Critics insist that growth must be responsible, with full cost recovery, better siting practices, efficiency standards, and genuine community input.

As AI demand continues to surge, this local “revolt” tests whether the physical infrastructure can scale fast enough without compromising quality of life and environmental goals. I think the national consensus is a big no.

Expect more moratoriums, ballot initiatives, legal battles, and negotiations in the coming months. The outcome will significantly influence not only the future of AI but also national energy policy and land-use planning for years to come.
