I believe Americans should have the ability to defend their human data, and their rights to that data, against the largest copyright theft in the history of the world.

Millions of Americans have spent the past two decades speaking and engaging online. Many of you here today have online profiles and writings and creative productions that you care deeply about. And rightly so. It’s your work. It’s you.

What if I told you that AI models have already been trained on enough copyrighted works to fill the Library of Congress 22 times over? For me, that makes it very simple: We need a legal mechanism that allows Americans to freely defend those creations. I say let’s empower human beings by protecting the very human data they create. Assign property rights to specific forms of data, create legal liability for the companies who use that data and, finally, fully repeal Section 230. Open the courtroom doors. Let the people sue those who take their rights, including those who do it using AI.

Third, we must add sensible guardrails to the emergent AI economy and hold concentrated economic power to account. These giant companies have made no secret of their ambitions to radically reshape our economic life. So, we ought to require transparency and reporting each time they replace a working man with a machine.

And the government should inspect all of these frontier AI systems, so we can better understand what the tech titans plan to build and deploy.

Ultimately, when it comes to guardrails, protecting our children should be our lodestar. You may have seen recently how Meta green-lit its own chatbots to have sensual conversations with children—yes, you heard me right. Meta’s own internal documents permitted lurid conversations that no parent would ever contemplate. And most tragically, ChatGPT recently encouraged a troubled teenager to commit suicide—even providing detailed instructions on how to do it.

We absolutely must require and enforce rigorous technical standards to bar inappropriate or harmful interactions with minors. And we should think seriously about age verification for chatbots and agents. We don’t let kids drive or drink or do a thousand other harmful things. The same standards should apply to AI.

Fourth and finally, while Congress gets its act together to do all of this, we can’t kneecap our state governments from moving first. Some of you may have seen that there was a major effort in Congress to ban states from regulating AI for 10 years—and a whole decade is an eternity when it comes to AI development and deployment. This terrible policy was nearly adopted in the reconciliation bill this summer, and it could have thrown out strong anti-porn and child online safety laws, to name a few. Think about that: conservatives out to destroy the very concept of federalism that they cherish … all in the name of Big Tech. Well, we killed it on the Senate floor. And we ought to make sure that bad idea stays dead.

We’ve faced technological disruption before—and we’ve acted to make technology serve us, the people. Powered flight changed travel forever, but you can’t land a plane on your driveway. Splitting the atom fundamentally changed our view of physics, but nobody expects to run a personal reactor in their basement. The internet completely recast communication and media, but YouTube will still take down your video if you violate a copyright. By the same token, we can—and we should—demand that AI empower Americans, not destroy their rights . . . or their jobs . . . or their lives.

Don’t forget tomorrow—Artist Rights Roundtable on AI and Copyright at American University in Washington DC

Artist Rights Roundtable on AI and Copyright:

Coffee with Humans and the Machines

Join the Artist Rights Institute (ARI) and the Entertainment Business Program at American University’s Kogod School of Business for a timely morning roundtable on AI and copyright from the artist’s perspective. We’ll explore how emerging artificial intelligence technologies challenge authorship, licensing, and the creative economy, and what courts, lawmakers, and creators are doing in response.

This roundtable is particularly timely because both the Bartz and Kadrey rulings expose gaps in author consent, provenance, and fair licensing, underscoring an urgent need for policy, identifiers, and enforceable frameworks to protect creators.

🗓️ Date: September 18, 2025

🕗 Time: 8:00 a.m. – 12:00 noon

📍 Location: Butler Board Room, Bender Arena, American University, 4400 Massachusetts Ave NW, Washington D.C. 20016

🎟️ Admission: Free and open to the public. Registration required at Eventbrite. Seating is limited.

🅿️ Parking map is available here. Pay-As-You-Go parking is available in hourly or daily increments ($2/hour, or $16/day) using the pay stations in the elevator lobbies of Katzen Arts Center, East Campus Surface Lot, the Spring Valley Building, Washington College of Law, and the School of International Service

Hosted by the Artist Rights Institute & American University’s Kogod School of Business, Entertainment Business Program

🔹 Overview:

☕ Coffee served starting at 8:00 a.m.

🧠 Program begins at 8:50 a.m.

🕛 Concludes by 12:00 noon — you’ll be free to have lunch with your clone.

🗂️ Program:

8:00–8:50 a.m. – Registration and Coffee

8:50–9:00 a.m. – Introductory Remarks by Kogod Dean David Marchick and ARI Director Chris Castle

9:00–10:00 a.m. – Topic 1: AI Provenance Is the Cornerstone of Legitimate AI Licensing:

Speakers:

- Dr. Moiya McTier, Senior Advisor, Human Artistry Campaign

- Ryan Lehnning, Assistant General Counsel, International at SoundExchange

- The Chatbot

Moderator: Chris Castle, Artist Rights Institute

10:10–10:30 a.m. – Briefing: Current AI Litigation

- Speaker: Kevin Madigan, Senior Vice President, Policy and Government Affairs, Copyright Alliance

10:30–11:30 a.m. – Topic 2: Ask the AI: Can Integrity and Innovation Survive Without Artist Consent?

Speakers:

- Erin McAnally, Executive Director, Songwriters of North America

- Jen Jacobsen, Executive Director, Artist Rights Alliance

- Josh Hurvitz, Partner, NVG and Head of Advocacy for A2IM

- Kevin Amer, Chief Legal Officer, The Authors Guild

Moderator: Linda Bloss-Baum, Director, Business and Entertainment Program, Kogod School of Business

11:40 a.m.–12:00 noon – Briefing: US and International AI Legislation

- Speaker: George York, SVP, International Policy, Recording Industry Association of America


🔗 Stay Updated:

Watch this space and visit Eventbrite for updates and speaker announcements.

Why Artists Are Striking Spotify Over Daniel Ek’s AI-Offensive Weapons Bet—and Why It Matters for AI Deals

Over the summer, a growing group of artists began pulling their catalogs from Spotify, not over miserable, Dickensian-level royalties alone, but over Spotify CEO Daniel Ek’s vast investment in Helsing, a European weapons company. Helsing builds AI-enabled offensive weapons systems that skirt international humanitarian law, specifically the weapons-review obligation in Article 36 of Additional Protocol I to the Geneva Conventions. Deerhoof helped kick off the current wave; other artists (including Xiu Xiu, King Gizzard & the Lizard Wizard, Hotline TNT, The Mynabirds, WU LYF, Kadhja Bonet, and Young Widows) have followed or announced plans to do so.

What is Helsing—and what does it build?

Helsing is a Munich-based defense-tech firm founded in 2021. It began with AI software for perception, decision-support, and electronic warfare, and has expanded into hardware. The company markets the HX-2 “AI strike drone,” described as a software-defined loitering munition intended to engage artillery and armored targets at significant range, and to kill people. It emphasizes resilience to electronic warfare, swarm/networked tactics via its Altra recon-strike platform, and a human in/on the loop for critical decisions. That limited role for humans in killing other humans is where it runs into Geneva Convention issues. Trust me, they know this.

Beyond drones, Helsing provides AI electronic‑warfare upgrades for Germany’s Eurofighter EK (with Saab), and has been contracted to supply AI software for Europe’s Future Combat Air System (FCAS). Public briefings and reporting indicate an active role supporting Ukraine since 2022, and a growing UK footprint linked to defense modernization initiatives. In 2025, Ek’s investment firm led a major funding round that valued Helsing in the multibillion‑euro range alongside contracts in the UK, Germany, and Sweden.

So let’s be clear—Helsing is not making some super tourniquet or AI medical device that has a dual use in civilian and military applications. This is Masters of War stuff. Which, for Mr. Ek’s benefit, is a song.

Why artists care

For these artists, the issue isn’t abstract: they see a direct line between Spotify‑generated wealth and AI‑enabled lethality, especially as Helsing moves from software into weaponized autonomy at scale. That ethical conflict is why exit statements explicitly connect Dickensian streaming economics and streamshare thresholds to military investment choices. In fact, it remains to be seen whether Spotify itself is using its AI products and the tech and data behind them for Helsing’s weapons applications.

How many artists have left?

There’s no official tally. Reporting describes a wave of departures and names specific acts. The list continues to evolve as more artists reassess their positions.

The financial impact—on Spotify vs. on artists

For Spotify, a handful of indie exits barely moves the needle. The reason is the pro-rata or “streamshare” payout model: each rightsholder is paid its proportional share of total streams out of a common revenue pool, not a fixed per-stream rate (unless you’re “lucky” enough to get a “greater of” formula). Remove a small catalog and its share simply reallocates to others. For artists, leaving can be meaningful: some replace streams with direct sales (Bandcamp, vinyl, fan campaigns) and often report higher revenue per fan. But at platform scale, the macro-economics barely budge.

Of course, because of Spotify’s tying relationships (explicit or implicit) with talent buyers for venues, not being on Spotify can be the kiss of death for a new artist competing for a Wednesday night at a local venue when the venue checks your Spotify stats.
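To make the pro-rata arithmetic concrete, here is a minimal Python sketch. Everything in it is hypothetical: the catalog names, the stream counts, and the pool size are invented for illustration, and it assumes the royalty pool stays the same size after an exit (in practice the pool follows platform revenue, which a few departures are unlikely to change). It is not Spotify's actual formula, only the proportional-share idea described above.

```python
from fractions import Fraction


def pro_rata_payouts(pool, streams):
    """Split a royalty pool in proportion to each rightsholder's share of total streams."""
    total = sum(streams.values())
    if total == 0:
        return {name: Fraction(0) for name in streams}
    return {name: Fraction(pool) * count / total for name, count in streams.items()}


# Hypothetical figures, for illustration only.
pool = 1_000_000  # royalty pool for the period, in dollars
streams = {
    "major_catalog": 9_000_000,
    "mid_catalog": 900_000,
    "indie_band": 100_000,
}

before = pro_rata_payouts(pool, streams)

# The indie band pulls its catalog; the pool is assumed unchanged.
remaining = {name: count for name, count in streams.items() if name != "indie_band"}
after = pro_rata_payouts(pool, remaining)

for name in remaining:
    gain = after[name] - before[name]
    print(f"{name}: {float(before[name]):,.2f} -> {float(after[name]):,.2f} (+{float(gain):,.2f})")
```

Under these assumed numbers the departing band gives up 10,000 dollars, and that amount is simply redistributed to the catalogs that stay. The platform pays out the same total either way.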

Why this is a cautionary tale for AI labs

Three practices make artist exits feel symbolically loud but structurally quiet, and they’re exactly what frontier AI should avoid:

1) Revenue‑share pools with opaque rules. Pro‑rata “streamshare” pushes smaller players toward zero; any exit just enriches whoever remains. AI platforms contemplating rev‑share training or retrieval deals should learn from this: user‑centric or usage‑metered deals with transparent accounting are more legible than giant, shifting pools.

2) NDA‑sealed terms. The streaming era normalized NDAs that bury rates and conditions. If AI deals copy that playbook—confidential blacklists, secret style‑prompt fees, unpublished audit rights—contributors will see protest as the only lever. Transparency beats backlash.

3) Weapons-related use cases for AI. We all know that frontier labs like Google, Amazon, Microsoft and others are also competing like trained seals for contracts from the Department of War. They use the same technology trained on culture ripped off from artists to kill people for money.

A clearer picture of Helsing’s products and customers

• HX‑2 AI Strike Drone: beyond‑line‑of‑sight strike profile, on‑board target re‑identification, EW‑resilient, swarm‑capable via Altra; multiple payload options; human in/on the loop.

• Eurofighter EK (Germany): with Saab, AI‑enabled electronic‑warfare upgrade for Luftwaffe Eurofighters oriented to SEAD/DEAD roles.

• FCAS AI Backbone (Europe): software/AI layer for the next‑generation air combat system under European procurement.

• UK footprint: framework contracting in the UK defense ecosystem, tied to strike/targeting modernization efforts.

• Ukraine: public reporting indicates delivery of strike drones; company statements reference activity supporting Ukraine since 2022.

The bigger cultural point

Whether you applaud or oppose war tech, the ethical through‑line in these protests is consistent: creators don’t want their work—or the wealth it generates—financing AI (especially autonomous) weaponry. Because the platform’s pro‑rata economics make individual exits financially quiet, the conflict migrates into public signaling and brand pressure.

What would a better model look like for AI?

• Opt‑in, auditable deals for creative inputs to AI models (training and RAG) with clear unit economics and published baseline terms.

• User‑centric or usage‑metered payouts (by contributor, by model, by retrieval) instead of a single, shifting revenue pool.

• Public registries and audit logs so participants can verify where money comes from and where it goes.

• No gag clauses on baseline rates or audit rights.
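As a rough illustration of the difference between a single pooled payout and a user-centric one, here is a short Python sketch. The listener names, play counts, and the flat fee are all hypothetical, and the two functions are simplified stand-ins for the general ideas, not any platform's or AI lab's real accounting.

```python
from collections import defaultdict
from fractions import Fraction


def pooled_payouts(fee_per_user, listens):
    """Pool every user's fee, then split the pool by share of all plays."""
    pool = Fraction(fee_per_user) * len(listens)
    totals = defaultdict(int)
    for plays in listens.values():
        for artist, count in plays.items():
            totals[artist] += count
    all_plays = sum(totals.values())
    if all_plays == 0:
        return {}
    return {artist: pool * count / all_plays for artist, count in totals.items()}


def user_centric_payouts(fee_per_user, listens):
    """Split each user's own fee only among the artists that user played."""
    payouts = defaultdict(Fraction)
    for plays in listens.values():
        user_total = sum(plays.values())
        if user_total == 0:
            continue  # this sketch leaves an inactive user's fee unallocated
        for artist, count in plays.items():
            payouts[artist] += Fraction(fee_per_user) * count / user_total
    return dict(payouts)


# Hypothetical data: two subscribers paying the same fee.
listens = {
    "heavy_listener": {"major_act": 900},
    "indie_fan": {"indie_band": 100},
}
fee = 10  # dollars per user per period (assumed)

pooled = pooled_payouts(fee, listens)
user_centric = user_centric_payouts(fee, listens)

print("pooled:      ", {a: float(v) for a, v in pooled.items()})
print("user-centric:", {a: float(v) for a, v in user_centric.items()})
```

With these assumed inputs the indie band receives 2 dollars from the pooled split and 10 dollars from the user-centric split, because its one fan's fee follows that fan's listening instead of being diluted by someone else's heavier usage. The same per-contributor logic could in principle be metered by model or by retrieval, which is the design choice the list above argues for.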

The strike against Spotify is about values as much as value. Ek’s bet on Helsing—drones, electronic warfare, autonomous weapons—makes those values impossible for some artists to ignore. Thanks to the pro‑rata royalty machine, the exits won’t dent Spotify’s bottom line—but they should warn AI platforms against repeating the same opaque rev‑shares and NDAs that leave creators feeling voiceless in streaming.

If you got one of these emails from Spotify, you might be interested

Spotify failed to consult any of the people who drive fans to the data abattoir: the musicians, artists, podcasters and authors.

Spotify has quietly tightened the screws on AI this summer—while simultaneously clarifying how it uses your data to power its own machine‑learning features. For artists, rightsholders, developers, and policy folks, the combination matters: Spotify is making it harder for outsiders to train models on Spotify data, even as it codifies its own first‑party uses like AI DJ and personalized playlists.

Spotify is drawing a bright line: no training models on Spotify; yes to Spotify training its own. If you’re an artist or developer, that means stronger contractual leverage against third‑party scrapers—but also a need to sharpen your own data‑governance and licensing posture. Expect other platforms in music and podcasting to follow suit—and for regulators to ask tougher questions about how platform ML features are audited, licensed, and accounted for.

Below is a plain‑English (hopefully) breakdown of what changed, what’s new or newly explicit, and the practical implications for different stakeholders.

Explicit ban on using Spotify to train AI models (third parties).

Spotify’s User Guidelines now flatly prohibit “crawling” or “scraping” the service and, crucially, “using any part of the Services or Content to train a machine learning or AI model.” That’s a categorical no for bots and bulk data slurps. The Developer Policy mirrors this: apps using the Web API may not “use the Spotify Platform or any Spotify Content to train a machine learning or AI model.” In short: if your product crawls or scrapes Spotify, or uses Spotify data to train a model, you’re in violation of the rules and risk enforcement and access revocation.

Spotify’s own AI/ML uses are clearer—and broad.

The Privacy Policy (effective August 27, 2025) spells out that Spotify uses personal data to “develop and train” algorithmic and machine‑learning models to improve recommendations, build AI features (like AI DJ and AI playlists), and enforce rules. That legal basis is framed largely as Spotify’s “legitimate interests.” Translation: your usage, voice, and other data can feed Spotify’s own models.

The user content license is very broad.

If you post “User Content” (messages, playlist titles, descriptions, images, comments, etc.), you grant Spotify a worldwide, sublicensable, transferable, royalty‑free, irrevocable license to reproduce, modify, create derivative works from, distribute, perform, and display that content in any medium. That’s standard platform drafting these days, but the scope—including derivative works—has AI‑era consequences for anything you upload to or create within Spotify’s ecosystem (e.g., playlist titles, cover images, comments).

Anti‑manipulation and anti‑automation rules are baked in.

The User Guidelines and Developer Policy double down on bans against bots, artificial streaming, and traffic manipulation. If you’re building tools that touch the Spotify graph, treat “no automated collection, no metric‑gaming, no derived profiling” as table stakes—or risk enforcement, up to termination of access.

Data‑sharing signals to rightsholders continue.

Spotify says it can provide pseudonymized listening data to rightsholders under existing deals. That’s not new, but in the ML context it underscores why parallel data flows to third parties are tightly controlled: Spotify wants to be the gateway for data, not the faucet you can plumb yourself.

What this means by role:

• Artists & labels: The AI‑training ban gives you a clear contractual hook against services that scrape Spotify to build recommenders, clones, or vocal/style models. Document violations (timestamps, IPs, payloads) and send notices citing the User Guidelines and Developer Policy. Meanwhile, assume your own usage and voice interactions can be used to improve Spotify’s models—something to consider for privacy reviews and internal policies.

• Publishers and collecting societies: The combination of “no third‑party training” + “first‑party ML training” is a policy trend to watch across platforms. It raises familiar questions about derivative data, model outputs, and whether platform machine learning features create new accounting categories—or require new audit rights—in future licenses.

• Policymakers: Read this as another brick in the “closed data/open model risk” wall. Platforms restrict external extraction while expanding internal model claims. That asymmetry will shape future debates over data‑access mandates, competition remedies, and model‑audit rights—especially where platform ML features may substitute for third‑party discovery tools.

Practical to‑dos

1) For rights owners: Add explicit “no platform‑sourced training” language in your vendor, distributor, or analytics contracts. Track and log known scrapers and third‑party tools that might be training off Spotify. Consider notice letters that cite the specific clauses.

2) For privacy and legal teams: Update DPIAs and data maps. Spotify’s Privacy Policy identifies “User Data,” “Usage Data,” “Voice Data,” “Message Data,” and more as inputs for ML features under legitimate interest. If you rely on Spotify data for compliance reports, make sure you’re only using permitted, properly aggregated outputs—not raw exports.

3) For users: I will be posting a guide on how to claw back your data. I may not hit everything, so I’m always open to suggestions about whatever else others spot.

Spotify’s terms give it very broad rights to collect, combine, and use your data (listening history, device/ads data, voice features, third-party signals) for personalization, ads, and product R&D. They also take a broad license to user content you upload (e.g., playlist art).

Key cites

• User Guidelines: prohibition on scraping and on “using any part of the Services or Content to train a machine learning or AI model.”

• Developer Policy (effective May 15, 2025): “Do not use the Spotify Platform or any Spotify Content to train a machine learning or AI model…” Also bans analyzing Spotify content to create new/derived listenership metrics or user profiles for ad targeting.

• Privacy Policy (effective Aug. 27, 2025): Spotify uses personal data to “develop and train” ML models for recommendations, AI DJ/AI playlists, and rule‑enforcement, primarily under “legitimate interests.”

• Terms & Conditions of Use: very broad license to Spotify for any “User Content” you post, including the right to “create derivative works” and to use content by any means and media worldwide, irrevocably.

[A version of this post first appeared on MusicTechPolicy]

Waterloo Records remastered: Iconic vinyl shop celebrates grand re-opening

We could not be happier for our friends at Waterloo Records in Austin on their reopening down the street. Read about it here. Support your local record store!

The famous record shop, which for decades sat at the corner of West Sixth Street and North Lamar Boulevard, finally lowered the needle on its new location Saturday; the soundtrack a mashup of excited shoppers, intermittent announcements about prizes and giveaways, and, of course, music.

Waterloo’s grand re-opening party marked the culmination of months of collaboration and planning among former majority owner John T. Kunz and new co-owners and operators Caren Kelleher and Trey Watson.

@johnpgatta Interviews @davidclowery in Jambands

David Lowery sits down with John Patrick Gatta at Jambands for a wide-ranging conversation that threads 40 years of Camper Van Beethoven and Cracker through the stories behind David’s 3-disc release Fathers, Sons and Brothers and how artists survive the modern music economy. They cover songwriter rights, road-tested bands, and why records still matter. Read it here.

David Lowery toured this year with a mix of shows celebrating the 40th anniversary of Camper Van Beethoven’s debut, Telephone Free Landslide Victory, duo and band gigs with Cracker, as well as solo dates promoting his recently-released Fathers, Sons and Brothers.

Fathers, the 28-track musical memoir of Lowery’s personal life, explores childhood memories, drugs at Disneyland and broken relationships. Of course, it tackles his lengthy career as an indie and major label artist whose catalog highlights include the alt-rock classic “Take the Skinheads Bowling” and the commercial breakthroughs “Teen Angst” and “Low.” The album works as a selection of songs that encapsulate much of his musical history (folk, country and rock) as well as an illuminating narrative that relates the ups, downs, tenacity, reflection and resolve of more than four decades as a musician.

Artist Rights Institute and Abby North Press Copyright Office for Accountability in MLC Redesignation

On August 22, 2025, the Artist Rights Institute, together with music publisher Abby North, filed joint comments with the U.S. Copyright Office as part of the agency’s ongoing five-year redesignation review of the Mechanical Licensing Collective (MLC). The comments memorialize an ex parte meeting with senior Copyright Office attorneys and stress that this redesignation process must not become a perfunctory exercise. Instead, it should serve as a meaningful opportunity to hold the MLC accountable for its statutory obligations.

The filing underscores that Congress deliberately gave the Copyright Office broad regulatory oversight because the MLC was established as an experiment under the Music Modernization Act. After five years, the evidence points to serious deficiencies: continuing metadata errors, lack of access to bulk matching tools for rightsholders, opaque governance decisions, and unresolved questions about audits and litigation. Most strikingly, the MLC’s unilateral investment of unmatched royalties in the securities markets—totaling more than $1.2 billion on the MLC’s latest tax return—raises concerns about statutory authority and fiduciary duties.

The joint comments argue that interest on unmatched royalties was intended by Congress as a penalty on licensees for failure to timely match and pay, not as a windfall for the MLC itself. By adopting an unauthorized investment policy, the MLC risks stepping outside its mandate, exposing its officers and directors to fiduciary liability.

To restore transparency and trust, the Institute and Ms. North propose clear regulatory reforms: conditional redesignation tied to performance benchmarks, publication of vendor match rates, real-time disclosure of governance actions, clarified metadata responsibilities, and monthly reporting of investment holdings. These reforms would align the U.S. system with international best practices and protect songwriters whose livelihoods depend on fair and accurate royalty distributions.

Read the full joint comments below.

9/18/25: Save the Date! @ArtistRights Institute and American University Kogod School to host Artist Rights Roundtable on AI and Copyright Sept. 18 in Washington, DC

🎙️ Artist Rights Roundtable on AI and Copyright: Coffee with Humans and the Machines

📍 Butler Board Room, Bender Arena, American University, 4400 Massachusetts Ave NW, Washington D.C. 20016 | 🗓️ September 18, 2025 | 🕗 8:00 a.m. – 12:00 noon

Hosted by the Artist Rights Institute & American University’s Kogod School of Business, Entertainment Business Program

🔹 Overview:

Join the Artist Rights Institute (ARI) and Kogod’s Entertainment Business Program for a timely morning roundtable on AI and copyright from the artist’s perspective. We’ll explore how emerging artificial intelligence technologies challenge authorship, licensing, and the creative economy — and what courts, lawmakers, and creators are doing in response.

☕ Coffee served starting at 8:00 a.m.

🧠 Program begins at 8:50 a.m.

🕛 Concludes by 12:00 noon — you’ll be free to have lunch with your clone.

🗂️ Program:

8:00–8:50 a.m. – Registration and Coffee

8:50–9:00 a.m. – Introductory Remarks by Dean David Marchick and ARI Director Chris Castle

9:00–10:00 a.m. – Topic 1: AI Provenance Is the Cornerstone of Legitimate AI Licensing:

Speakers:

Dr. Moiya McTier, Human Artistry Campaign

Ryan Lehnning, Assistant General Counsel, International at SoundExchange

The Chatbot

Moderator: Chris Castle, Artist Rights Institute

10:10–10:30 a.m. – Briefing: Current AI Litigation, Kevin Madigan, Senior Vice President, Policy and Government Affairs, Copyright Alliance

10:30–11:30 a.m. – Topic 2: Ask the AI: Can Integrity and Innovation Survive Without Artist Consent?

Speakers:

Erin McAnally, Executive Director, Songwriters of North America

Dr. Richard James Burgess, CEO, A2IM

Dr. David C. Lowery, Terry College of Business, University of Georgia

Moderator: Linda Bloss-Baum, Director, Business and Entertainment Program, Kogod School of Business

11:40 a.m.–12:00 noon – Briefing: US and International AI Legislation

🎟️ Admission:

Free and open to the public. Registration required at Eventbrite. Seating is limited.

🔗 Stay Updated:

Watch Eventbrite, this space and visit ArtistRightsInstitute.org for updates and speaker announcements.

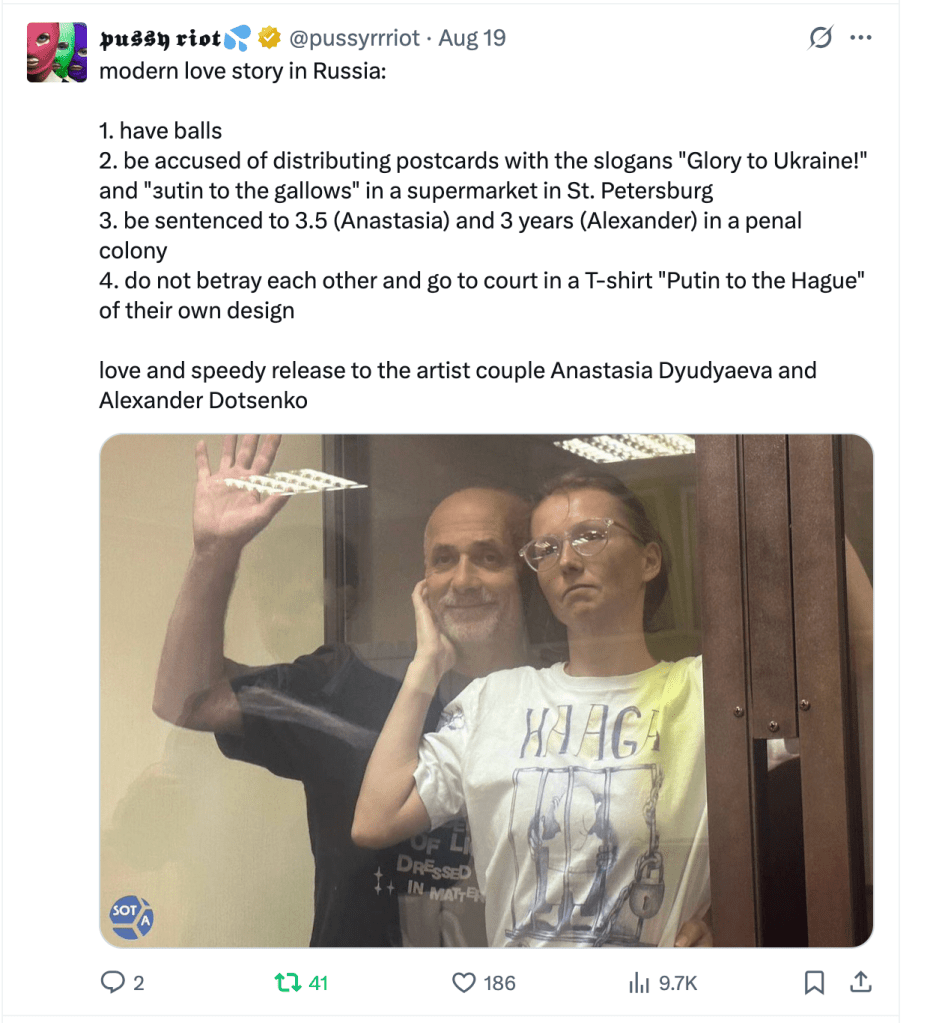

хулиган (Hooligan): Love to Anastasia Dyudyaeva and Alexander Dotsenko

In July 2024, a military court in Saint Petersburg convicted Russian artists Anastasia Dyudyaeva and her husband Alexander Dotsenko on charges of “public calls for terrorism” after they placed anti-war messages—some in Ukrainian, one reading “Putin to the gallows”—on napkins or postcards in a Lenta supermarket. Dyudyaeva received a 3½-year sentence; Dotsenko, three years. They denied wrongdoing, asserting their creative expression was mischaracterized. Their home, which had hosted anti-war exhibitions, was searched, and they were added to Russia’s registry of “terrorists and extremists.”

@ArtistRights Newsletter 8/18/25: From Jimmy Lai’s show trial in Hong Kong to the redesignation fight over the Mechanical Licensing Collective, this week’s stories spotlight artist rights, ticketing reform, AI scraping, and SoundExchange’s battle with SiriusXM.

Save the Date! September 18 Artist Rights Roundtable in Washington produced by Artist Rights Institute/American University Kogod Business & Entertainment Program. Details at this link!

Artist Rights

JIMMY LAI’S ORDEAL: A SHOW TRIAL THAT SHOULD SHAME THE WORLD (MusicTechPolicy/Chris Castle)

Redesignation of the Mechanical Licensing Collective

Ex Parte Review of the MLC by the Digital Licensee Coordinator

Ticketing

StubHub Updates IPO Filing Showing Growing Losses Despite Revenue Gain (MusicBusinessWorldwide/Mandy Dalugdug)

Lewis Capaldi Concert Becomes Latest Ground Zero for Ticket Scalpers (Digital Music News/Ashley King)

Who’s Really Fighting for Fans? Chris Castle’s Comment in the DOJ/FTC Ticketing Consultation (Artist Rights Watch)

Artificial Intelligence

MUSIC PUBLISHERS ALLEGE ANTHROPIC USED BITTORRENT TO PIRATE COPYRIGHTED LYRICS (MusicBusinessWorldwide/Daniel Tencer)

AI Weather Image Piracy Puts Storm Chasers, All Americans at Risk (Washington Times/Brandon Clemen)

TikTok After Xi’s Qiushi Article: Why China’s Security Laws Are the Whole Ballgame (MusicTechSolutions/Chris Castle)

Reddit Will Block the Internet Archive (to stop AI scraping) (The Verge/Jay Peters)

SHILLING LIKE IT’S 1999: ARS, ANTHROPIC, AND THE INTERNET OF OTHER PEOPLE’S THINGS (MusicTechPolicy/Chris Castle)

SoundExchange v. SiriusXM

SOUNDEXCHANGE SLAMS JUDGE’S RULING IN SIRIUSXM CASE AS ‘ENTIRELY WRONG ON THE LAW’ (MusicBusinessWorldwide/Mandy Dalugdug)

PINKERTONS REDUX: ANTI-LABOR NEW YORK COURT ATTEMPTS TO CUT OFF LITIGATION BY SOUNDEXCHANGE AGAINST SIRIUS/PANDORA (MusicTechPolicy/Chris Castle)
