Something is moving in Washington.

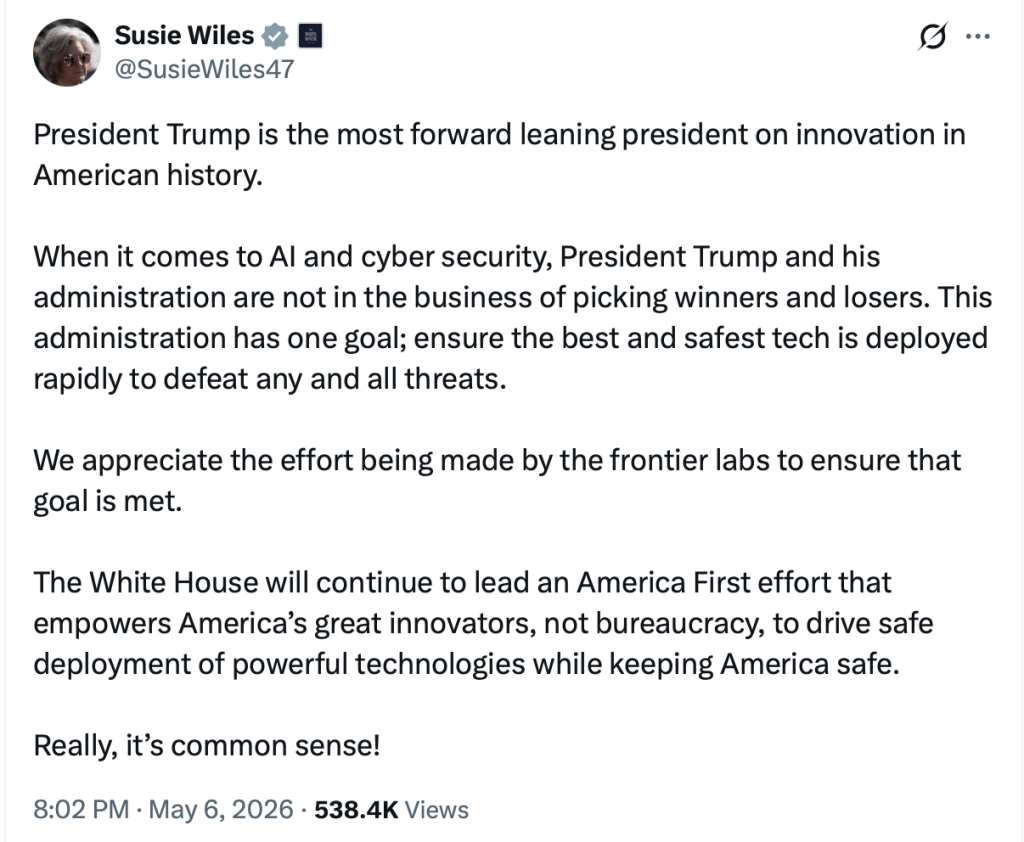

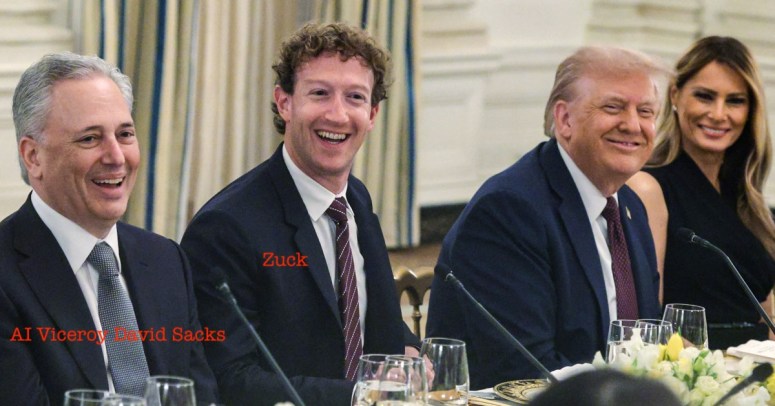

A recent report suggests that the Trump administration is considering a new executive order on artificial intelligence. On its face, that might sound like more of the same—another round of AI policy chatter promoted by David Sacks, the Silicon Valley lobbyist and billionaire investor who has been pushing a “don’t slow it down” approach.

But this time feels different.

Sacks appears to have been pushed aside, at least a bit. Don’t count your chickens just yet. But the shift in tone matters. And the timing matters even more. The order is reportedly being weighed ahead of Trump’s visit to China, where AI development has become a central axis of geopolitical competition.

That context changes the story. For the past several years, the dominant policy posture around AI has been simple: don’t slow down innovation. Because China.

That argument has been doing a lot of work. It has been used to wave away concerns about training data, to discourage state and local oversight of data center buildouts, and to greenlight massive infrastructure commitments—including dedicated nuclear power for AI campuses run by Google, Microsoft, Meta, and Amazon.

In other words: build the machine first. Deal with the consequences later.

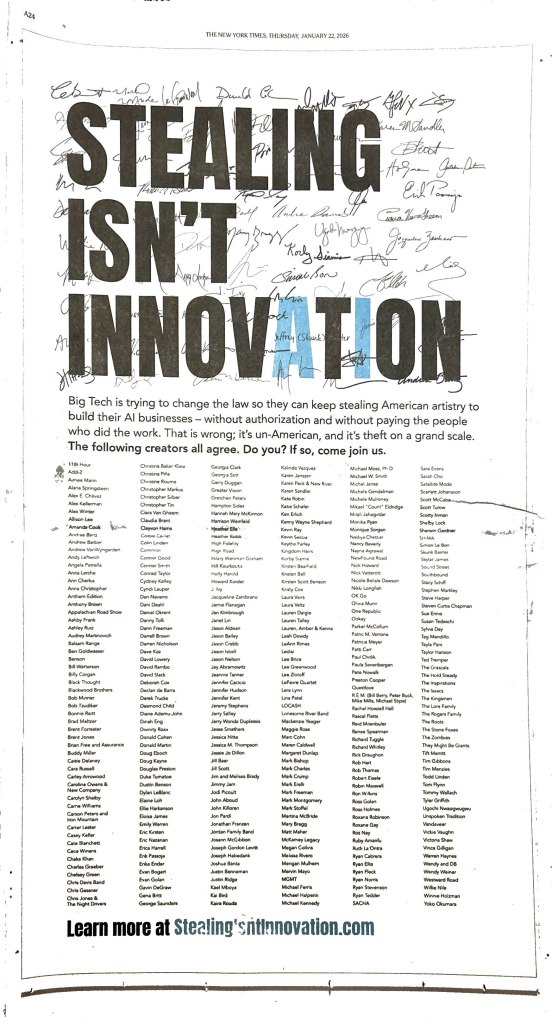

Artists have been the raw material for that strategy.

Musicians, book authors, visual artists: these are not just inputs. They are the training ground for systems that are now capable of producing substitutive outputs that overwhelm creators and flood markets. And until now, the White House policy conversation, led by David Sacks, the R Street Institute, and the hyperscalers, has largely treated that massive theft as an acceptable cost of staying ahead.

What makes this potential executive order interesting is that it suggests a shift away from that posture. If the administration is preparing to meet with China on AI, it has an incentive to show that the United States takes control, governance, and strategic resources seriously. And in this context, creative works start to look less like “free fuel” and more like national assets.

That may matter for artists.

Because once you recognize that AI systems derive value from the signals embedded in creative works—voice, tone, style, expression—you start to see those works differently. They are not just content. They are repositories of identity and cultural value.

And they are being extracted at scale.

A more protective policy framework—whether it focuses on model review, training data standards, or provenance—creates an opening. It creates space for the idea that artists are not just upstream contributors, but stakeholders whose work underpins the entire system.

This doesn’t mean the executive order, if it comes, will solve the problem. It won’t.

But it could mark an inflection point.

If policymakers begin to treat AI not just as a technology race but as a resource competition, then the role of creators becomes harder to ignore. You can’t claim to lead in AI while simultaneously disregarding the human material that makes those systems work.

That contradiction is starting to surface. The industry allowed artists, and even copyright itself, to be lumped in with zoning boards as “bureaucracy.” That in turn allowed David Sacks and his ilk to try to create an alternate universe where “innovation” ran wild to “beat China” while also selling chips to China out the back door.

For artists, the takeaway is simple: pay attention to the shift in tone. Policy signals often precede legal ones. What gets framed as a national priority today can become a regulatory framework tomorrow.

For the first time in a while, there are signs that the conversation may be moving—however slightly—toward recognizing the value that artists bring to the AI ecosystem.

Sacks may not be gone. Silicon Valley rarely loses outright; just look at the MLC. But even a partial shift away from the “move fast and ingest everything” playbook is meaningful.

Because for artists, the question has never been whether AI will be built.

The question is whether it will be built on you or with you.
